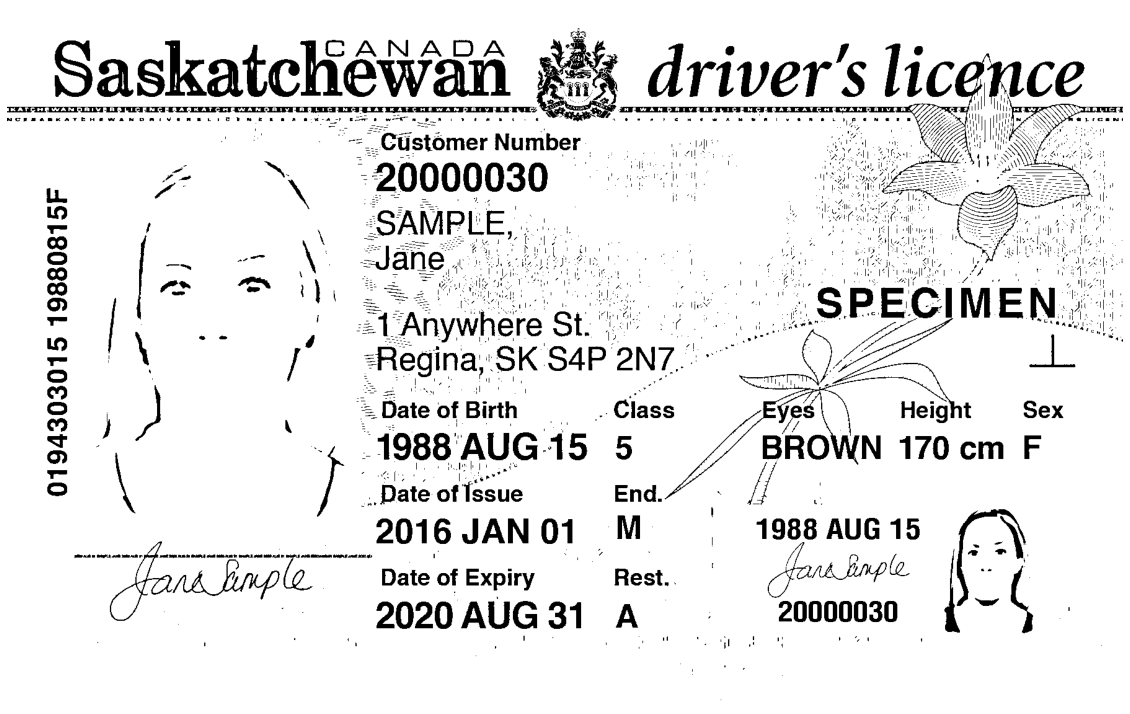

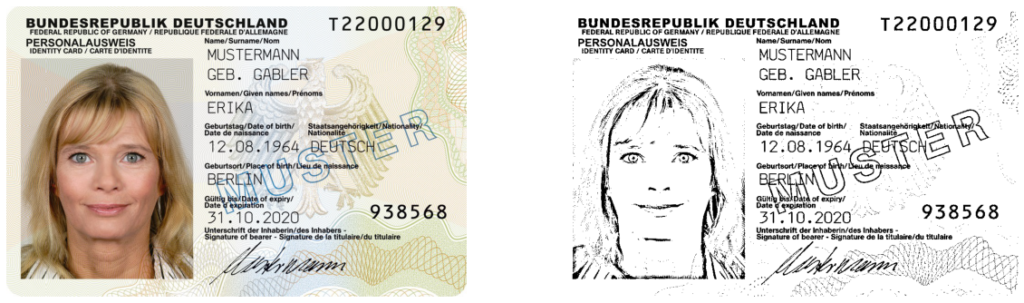

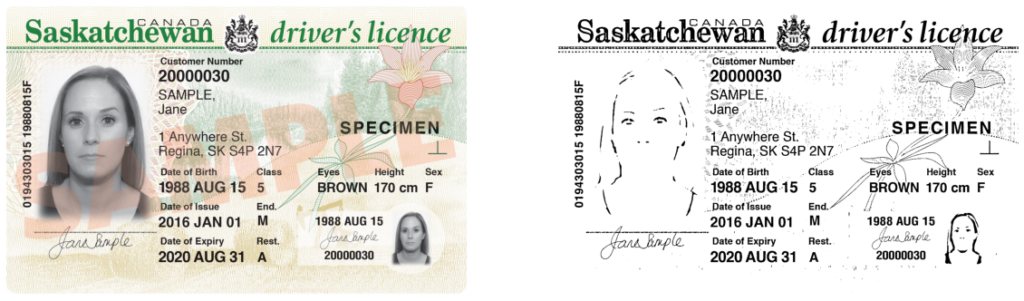

One of the important steps in OCR is the thresholding process. It helps us in separating the text regions (foreground) from the background. If you apply a thresholding algorithm like OTSU or Sauvola, you might end up with a lot of noise. Some of your text regions may even get categorized as background. Take a look at the below examples where I applied Sauvola algorithm directly on grayscale images without any pre-processing.

As you can see in the outputs, there is a lot of noise in the images and a few characters in the binary image are also vague. Passing this image directly into an OCR engine like Tesseract may not yield best results.

To overcome these issues related to noise and loss of text regions, I usually try to adjust gamma and remove noise before thresholding. The choice of algorithms for contrast enhancement and noise removal may differ based on the image type. If the image is of low resolution, noise removal may clean up some of your regions of interest. So, its important to use noise removal only when the image is of high resolution.

Here is my pre-processing code.

from skimage.color import rgb2gray

import matplotlib.pyplot as plt

from skimage.io import imread

from skimage.filters import threshold_sauvola

from skimage.exposure import is_low_contrast

from skimage.exposure import adjust_gamma

from skimage.restoration import denoise_tv_chambolle

cimage = imread('https://muthu.co/wp-content/uploads/2020/08/image9.jpg')

gamma_corrected = adjust_gamma(cimage, 1.2)

noise_removed = denoise_tv_chambolle(gamma_corrected, multichannel=True)

gry_img = rgb2gray(cimage)

th = threshold_sauvola(gry_img, 19)

bimage = gry_img > th

fig, ax = plt.subplots(ncols=2, figsize=(20,20))

ax[0].imshow(cimage)

ax[0].axis("off")

ax[1].imshow(bimage, cmap="gray")

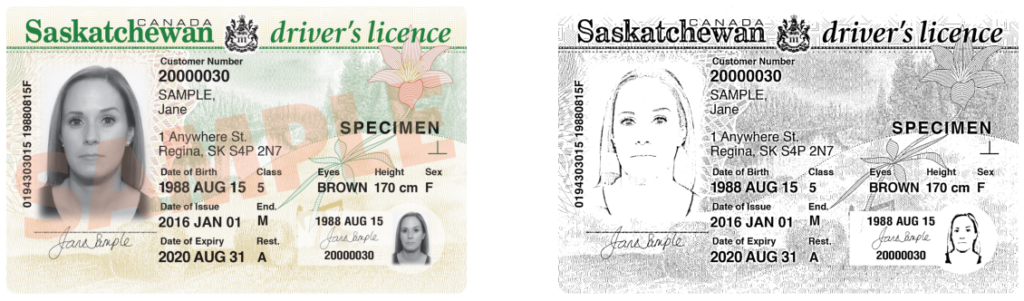

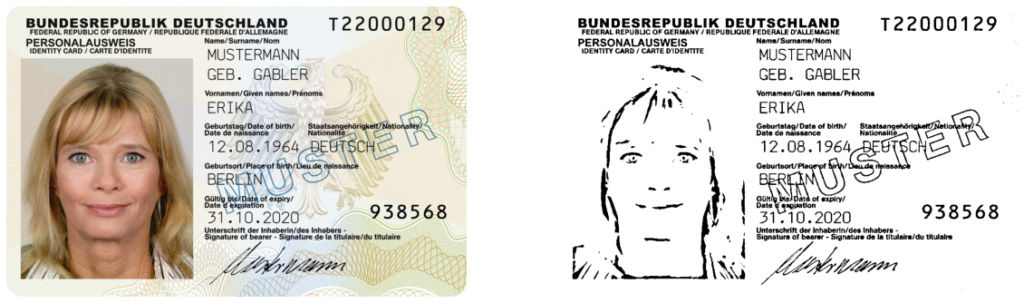

ax[1].axis("off")The outputs for the above sample images is as below:

What I am basically doing is enhancing the contrast first to darken text regions and then removing noise using the Total_variation_denoising method.